What Makes AI-Generated Ads Believable Enough To Perform?

The Conversation Around AI Ads Has Changed

Over the last couple of weeks, our team has spent a lot of time inside Kreator testing AI-generated UGC ads, comparing models, experimenting with prompts, extending scenes, and analyzing outputs frame by frame while trying to understand where this category of AI advertising is heading now that the quality has improved so quickly that even people deep in the space occasionally stop and ask, “Wait… are we su

What made those discussions interesting was that almost nobody on the team was talking about AI video the way people talked about it even a year ago.

Earlier AI-generated videos usually failed immediately and obviously: the faces were too smooth, the eyes moved strangely, the lip sync broke constantly, the lighting looked artificial, and the movement had a robotic stiffness that told your brain the entire thing was fake before the video had even reached the three-second mark. But after spending time testing newer workflows and models inside Kreator, especially while generating creator-style UGC ads, we realized that visual realism is no longer the main thing holding these ads back.

The Uncanny Valley Is Moving

The uncanny valley is moving.

The problem now is much more subtle, and honestly much more fascinating from a marketing perspective. Viewers are no longer primarily judging whether something looks real; they are subconsciously judging whether it behaves like something real. That is a completely different challenge, and it pulls the conversation away from rendering quality and into psychology, cinematography, pacing, human behavior, environmental realism, and all the tiny imperfections that people naturally associate with authenticity.

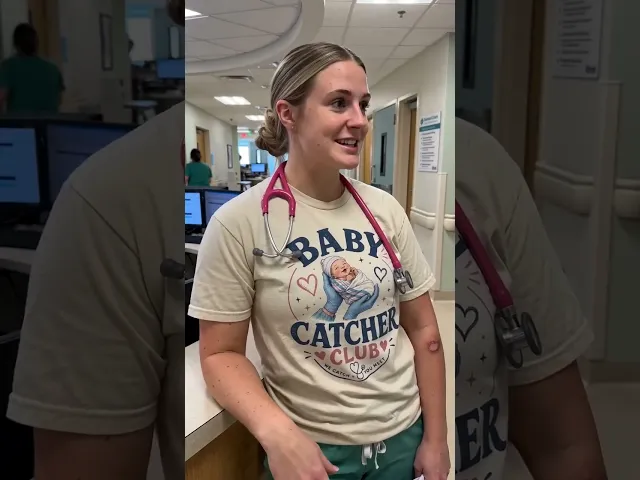

One of the biggest internal talking points from our testing ended up being a scar on a nurse’s arm.

Not the facial rendering.

Not the lip sync.

Not the lighting.

A scar.

Because when that detail appeared in one of the generated videos, the entire character suddenly felt more believable in a way that was difficult to explain at first but instantly obvious to everyone watching it. The more we discussed it internally, the more we realized that the scar represented something much bigger about where AI-generated advertising is going. The detail wasn’t important because it was visually impressive. It was important because it created implied life experience, subtle imperfection, and subconscious authenticity, all of which made the character feel less like a generated avatar and more like a real person who existed before the video started.

The Details That Changed The Outputs

That realization started changing the way we approached prompting entirely.

Instead of trying to create “perfect” people, the prompts started becoming much more observational and human, almost like describing someone you actually noticed in real life rather than describing a polished marketing character.

The team began adding details like wrinkles in shirts, freckles, uneven skin texture, smudges on glasses, flyaway hairs, slight motion blur during hand gestures, ambient room noise, imperfect kitchen backgrounds, handheld chest-level camera framing, inconsistent lighting, and natural conversational pacing with pauses and interruptions.

What was surprising was how dramatically these small details improved believability compared to highly polished outputs that technically looked cleaner but emotionally felt far more artificial.

A male character with messy hair and a less conventionally attractive appearance actually felt significantly more believable than another character that looked almost “too perfect”.

Does the future of AI-generated advertising actually depend less on photorealistic perfection and more on recreating the subtle imperfections our brains associate with real human presence?

That observation sounds small on the surface, but it has major implications for performance marketing. The goal of advertising has never been to create perfect people. It has always been to create trust, relatability, emotional familiarity, and enough authenticity for someone to stop scrolling long enough to actually listen.

Why Camera Behavior Matters More Than Rendering Quality

A lot of the realism improvements we saw during testing had surprisingly little to do with the avatar itself and much more to do with camera behavior, pacing, and environmental consistency. Some of the strongest outputs inside Kreator were not the cleanest or most cinematic. They were the ones that felt like real creator content filmed naturally on a phone.

The best generations consistently used handheld UGC-style framing with slight instability while walking through a kitchen, chest-level camera positioning, conversational pauses, and subtle switches between mounted and handheld angles, all of which immediately made the footage feel more believable because it mirrored how real creators actually record content on TikTok and Instagram.

One detail the team noticed repeatedly was how much realism improved when the camera behavior itself felt imperfect. Slight movement while talking, framing inconsistencies, quick posture changes, and uneven pacing created far more authenticity than perfectly stabilized shots, which often ended up feeling strangely artificial despite technically looking “better.”

Another surprisingly effective tactic was intentionally writing imperfections into prompts instead of describing polished studio scenes. Messy kitchens, wrinkles in shirts, smudged glasses, scars, freckles, and uneven lighting consistently made outputs feel more believable because real UGC rarely looks polished in the first place.

What became obvious very quickly was that realism in AI advertising is no longer just visual realism.

It’s behavioral realism.

What We Learned About AI UGC Workflows Inside Kreator

The conversation inside serious marketing teams is no longer centered around whether AI-generated ads are possible. Instead, the focus has become operational, with teams discussing which models create believable creator-style authenticity consistently enough to scale real advertising workflows.

One of the clearest insights from our testing was that Seedance 2.0 consistently produced the most believable talking-style UGC outputs, especially when the goal was natural movement, conversational pacing, and realistic lip sync. The biggest difference was not raw visual quality but behavioral consistency: the characters moved less like AI avatars and more like actual people casually filming content on their phones.

More importantly, Seedance 2.0 often produced usable outputs in a single generation instead of requiring multiple retries, which became one of the most important workflow considerations during testing. Once you start thinking like a performance marketer instead of an AI hobbyist, one-shot success rates matter enormously because a more expensive model can still become operationally cheaper if it dramatically reduces retries and editing cycles.
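To make that trade-off concrete, here is a minimal sketch of the arithmetic. It assumes each generation succeeds independently with a fixed probability, so the expected number of attempts per usable ad is 1 divided by the success rate. The function name, prices, and success rates below are hypothetical placeholders, not figures from our testing.

```python
def cost_per_usable_ad(cost_per_generation: float, one_shot_success_rate: float) -> float:
    """Expected spend to get one usable ad.

    Assumes independent attempts with a fixed success probability,
    so expected attempts = 1 / success rate.
    """
    if not 0 < one_shot_success_rate <= 1:
        raise ValueError("one_shot_success_rate must be in (0, 1]")
    return cost_per_generation / one_shot_success_rate


# Hypothetical numbers, for illustration only.
cheap_model = cost_per_usable_ad(cost_per_generation=0.50, one_shot_success_rate=0.25)
pricey_model = cost_per_usable_ad(cost_per_generation=1.20, one_shot_success_rate=0.75)

print(f"cheap model:  {cheap_model:.2f} per usable ad")
print(f"pricey model: {pricey_model:.2f} per usable ad")
```

With these placeholder inputs, the model that costs more per generation comes out cheaper per usable ad, and that is before counting the editing time that failed or borderline outputs tend to consume.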

The team also discovered that prompt specificity had a huge impact on realism quality. Generic prompts consistently produced weaker outputs, while highly observational prompts dramatically improved believability. Instead of:

“Photorealistic woman speaking in kitchen”

the strongest generations included details like:

flyaway hairs

wrinkles in clothing

smudged glasses

handheld chest-level framing

slight motion blur during gestures

natural pauses and interruptions

The more the prompts resembled observations about a real person instead of descriptions of a polished marketing character, the more believable the outputs became.

Example Prompt
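As a minimal illustration, the observational details listed above could be assembled into a single text prompt like this. The class, its field names, and the example wording are assumptions made for illustration. This is plain string assembly, not an API of Kreator or Seedance 2.0, and it is not the exact prompt from our testing.

```python
from dataclasses import dataclass, field


@dataclass
class ObservationalPrompt:
    """Hypothetical helper that joins observed details into one prompt string."""

    subject: str
    setting: str
    appearance: list[str] = field(default_factory=list)
    camera: list[str] = field(default_factory=list)
    pacing: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Start with who and where, then add each non-empty group of details.
        parts = [f"{self.subject} in {self.setting}"]
        groups = (
            ("Appearance", self.appearance),
            ("Camera", self.camera),
            ("Pacing", self.pacing),
        )
        for label, details in groups:
            if details:
                parts.append(f"{label}: {', '.join(details)}")
        return ". ".join(parts) + "."


example = ObservationalPrompt(
    subject="Woman speaking to camera",
    setting="a lived-in kitchen",
    appearance=["flyaway hairs", "wrinkles in clothing", "smudged glasses"],
    camera=["handheld chest-level framing", "slight motion blur during gestures"],
    pacing=["natural pauses and interruptions"],
)
prompt_text = example.render()
print(prompt_text)
```

Keeping appearance, camera, and pacing in separate groups makes it easy to vary one group at a time when comparing outputs, which fits the kind of side-by-side testing described above.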

The Bigger Shift Happening In Marketing

This may actually be the biggest shift happening underneath all of this.

Modern performance marketing rewards creative velocity more than ever before, which means teams need more concepts, more testing, more creators, more hooks, more iterations, and faster turnaround times than traditional production workflows can realistically support. This is especially true for brands competing in aggressive paid social environments where ad fatigue happens quickly and the cost of slow creative iteration compounds fast. AI-generated video is beginning to change those economics in a very real way, not because it replaces creativity, but because it dramatically reduces the friction between an idea and a testable execution.

But marketers are not simply looking for AI-generated ads that “look real.”

They need ads that actually perform without destroying trust, hurting brand quality, or making audiences instantly disengage the moment something feels synthetic or emotionally off. The realism conversation is really a performance conversation, because teams are trying to solve for viewer skepticism, authenticity, attention retention, conversion performance, and scalable content production all at the same time.

That’s why the conversation around AI advertising has evolved so quickly over the last year. The most interesting discussions are no longer about whether AI can generate realistic humans, but about whether AI can generate believable moments consistently enough to work in real advertising environments, where trust, attention, and authenticity determine whether an ad succeeds or disappears into the endless scroll within seconds.